The History of Video Cameras

The Video Camera – From Innovation to Ubiquity

Today, the video camera is a tool that is so deeply woven into everyday life that it seems strange to find a person without one. Whether on a smartphone, tablet, laptop, or other device, most of us carry video technology and seldom think about it. However, when one stops to consider the history of this device’s development, one develops a new respect for the advances in the technology of motion photography.

It is important to differentiate the video camera from a “movie” camera, which is a motion picture camera that uses photographic film to record images. Film cameras have a long and varied history dating back to the late 19th century. Modern cameras use video technology rather than film. Video is an electronic format, captured by image sensors such as CCD chips, that has historically been stored on media such as magnetic tape, optical discs, and solid-state flash memory.

Television and the Birth of Video

The video camera was initially developed for use in broadcast media. In the early 1900s, the first experiments in image transmission were completed. A Scottish engineer named John Logie Baird contributed to this work with a variation of an older device known as a “Nipkow disk,” a mechanical device that breaks an image into “scanlines” using a rotating disc with holes cut into it. Early televisions produced mechanical video images, but by the 1930s, new all-electronic designs based on a cathode-ray video camera tube, including two prominent versions by engineers Philo Farnsworth and Vladimir Zworykin, replaced the mechanical variations with electronic scanning technology. This system, which was created for broadcasting, became the standard in the television industry and remained in wide use until the 1980s.

Cathode-ray tubes were suitable for broadcast purposes, but finding a medium for recording was a challenge. The obvious choice was magnetic tape, a technology that was already in use for sound recording. However, because video carries far more information than audio, a practical way to package the tape remained elusive through the mid-20th century. Two companies, JVC and Panasonic, introduced a great advancement in video recording when they developed the first self-contained large-format video cassette tapes in the 1970s. This invention later rose to consumer prominence in the 1980s via the video camcorder, a device that brought video recording into the mainstream.

The Transition to Digital

The transition from analog to digital video capture began in 1981 with the development of the Sony Mavica single-lens camera. This camera used a rotating magnetic disc, which was 2 inches in diameter and could record up to 50 still frames for playback or printing. Even though it is credited with starting the digital camera revolution, the Mavica was not a “true” digital camera as we understand them today, since its images were still recorded as analog signals on the magnetic disc.

Kodak brought the next innovations in digital camera technology, introducing a number of digital camera products in 1987 and creating the “Photo CD” in 1990. In 1991, Kodak released the first digital camera intended for professional use by photojournalists, which was built on a Nikon F3 body and included a 1.3-megapixel sensor. Digital image capture made the leap from professional photography to the consumer level in 1994 with the Apple QuickTake. This product was the first of its kind and was followed by similar digital camera products from Kodak, Casio, Sony, and others.

The first digital video camera to feature video compression was released in 1993 and was known as the Ampex DCT. This innovation was soon followed by a flood of digital video cameras from familiar companies including Sony, JVC, and Panasonic. As the successors to the camcorder, these cameras first recorded to optical discs and later to flash memory.

Digital Video in Surveillance Technology

The first digital security camera systems were used by the military for security and reconnaissance. In 1980, the same camera technology that brought soap operas to televisions nationwide also helped protect businesses against fraud and theft.

These systems typically used closed-circuit television (CCTV) to allow companies to monitor their surroundings. While the security cameras themselves were strictly for image capture, recording was possible at the point of display via videocassettes of various formats. Video surveillance has grown since the 1980s, with the technology expanding to government use in the United Kingdom and to home use for safety and security. The transition from analog to digital in the surveillance realm brought an increase in the quality of CCTV systems, with resolutions up to 4K and innovations like infrared cameras with “night vision” and thermal imaging technologies.

The late 1990s also saw the introduction of DVR devices, digital video recorders that used hard disk drives and similar storage devices to store large amounts of digital video information in a single home setup. This innovation quickly found an audience in surveillance and in niche markets such as “paranormal investigations.” In fact, crews of television programs like “Ghost Hunters” exposed many consumers to DVR setups.

Modern Digital Video Cameras

Professional video cameras have come a very long way since the introduction of the 1.3-megapixel digital camera. In the early 2000s, Sony developed the first high-definition digital video cameras. Today, high-resolution digital video is no longer confined to the television studio. High-end professional digital video cameras are used for independent films, web series, and hobbyist purposes.

In the hand-held realm, the familiar camcorder of the 1980s underwent a more gradual evolution to digital. It wasn’t until 2003 that Sony introduced the first digital video camcorder that eliminated the need for tape entirely.

Hand-held video cameras have found use in projects from home movies to professional film. Director Michael Almereyda’s 1994 film, Nadja, notably uses a toy video camera manufactured by Fisher-Price in certain sequences for its gritty, low-resolution effect. Hand-held devices have also contributed greatly to live journalism, as well as journalism’s casual cousin, “vlogging,” which is video blogging on websites like YouTube.

Ever Smaller, Everywhere: Webcams and Camera Phones

Even more influential in the realm of internet video is the webcam. These devices first appeared in the 1990s as consumer curiosities and as security tools at businesses. Since the first low-quality webcams were introduced, they have become smaller and their quality has increased. Today, nearly every portable computer includes a webcam above the screen. While smaller, faster, and higher in resolution than their peripheral predecessors, webcams still vary widely in quality, and many remain unimpressive. As such, they are used predominantly by hobbyists, either to stream live video via services like Facebook Live or, in the case of gamers on sites like Twitch, in tandem with the PC’s own video display. The latter results in a live video stream of a video game inset with a live video stream of the player.

Just as webcams became smaller and more ubiquitous among our personal computers, camera phones became commonplace in the 2000s. Functionally, these cameras are not too different from earlier digital cameras and, like home webcams, they vary in quality. Their primary use tends to be for everyday events, as opposed to home movies, which tend to capture milestones such as birthdays, holidays, and vacations. Cell phone video is used to capture traffic stops, interesting people on the street, and events such as accidents or impromptu performances. While some of these videos certainly find their way to sites like YouTube, more end up as bite-size pieces of video shared in real time on Twitter, Facebook, Snapchat, and Instagram.

The Scientific Legacy of Digital Video

Digital video camera technology has become inseparable from our daily lives and is expanding to broader horizons. Tiny digital cameras are making great strides in the world of healthcare, as smaller cameras make endoscopic procedures far easier than they have been in the past. In fact, experiments in pill-sized, wireless cameras could make endoscopy as easy as taking an aspirin. In the realm of astronomy, the time-tested technology of CCD capture remains in widespread use, although now via digital channels rather than analog. These sensitive CCD chips capture individual photons of light via the excitation of electrons on the chip itself and can pinpoint photons of very specific wavelengths, revealing objects in the ultraviolet and X-ray spectra that would otherwise be invisible among all the light and noise in our galaxy. Digital cameras have become our eyes on Mars and beyond. The Curiosity rover features no fewer than 17 digital cameras capable of high-quality photos and stereoscopic three-dimensional videography. New developments in robotics, like Kuri, use digital video together with artificial intelligence to create an in-home robotic companion that can see your home’s layout, learn its way around, and recognize the faces of the humans around it.

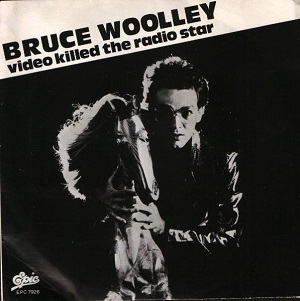

“Video Killed the Radio Star” - from Inception to Universality

In conclusion, the video camera has come a very long way since its roots in the 19th-century technology of the Nipkow disk. What began as an engineering experiment for the purposes of communication and the advancement of science has grown far beyond its original bounds to become something inseparable from daily life for all of us. Taken as a whole, the history of the video camera is truly a history that has shaped the way each of us lives.

Featured Image Credit: JosepMonter / Pixabay

In Post Image 2: By Morio Own work, CC BY-SA 3.0

In Post Image 4: Nachrichten_muc / Pixabay

In Post image 7: Fair use